During the Spring Meetings, the Governance Global Practice, the Independent Evaluation Group, and the International Initiative for Impact Evaluation (3ie) co-hosted a lively panel discussion with a provocative title: Why focus on results when no one uses them?

Albert Byamugisha, Commissioner for Monitoring and Evaluation from the Uganda Office of the Prime Minister, kicked off the session with a rebuttal to this question by sharing examples of the Ugandan government’s commitment to using and learning from both positive and negative results. Although this sounds like common sense, it is not always common practice.

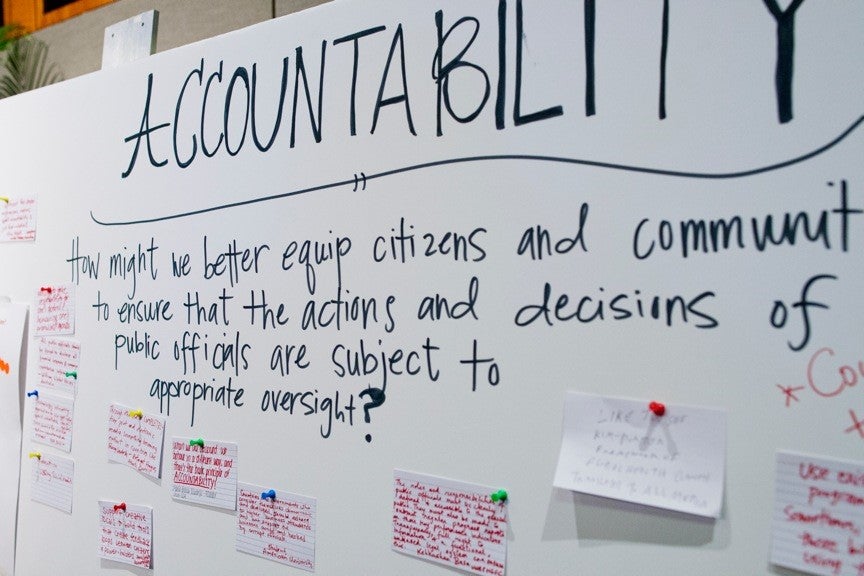

Mr. Byamugisha told us about Uganda’s public fora where citizens hold the government accountable; the two-million-dollar commitment to collect evaluations, a substantial expenditure for Uganda; and a committee set up with the Ministry of Finance, academia, and the Prime Minister’s office to oversee independent evaluations and formulate policy recommendations that are presented to ministers.

What works? What doesn’t work? Real-time evaluations are used to inform policymakers about what is happening on the ground so they can take action to address problems before they get worse.

I was heartened to hear about the capacity building efforts in Uganda amongst government officials, CSOs, and academia to try to help connect evaluation outcomes and outputs to policy-making and action.

In many countries, this is simply not happening, and some are struggling to get any data or information about program results at all.

In developing countries, we must invest in the infrastructure that can turn raw data into relevant evidence through evaluation. Further efforts are needed to make this evidence available to the multiple actors that can benefit from the knowledge gained, most notably the administrators and policy-makers that are responsible for the formulation and implementation of government programs.

We should also acknowledge that, 99% of the time, public policies rest on hypotheses rather than infallible laws. There are hardly ever any guarantees that policies on paper will be implemented along a linear path.

If citizens’ security or health is at risk, governments cannot always wait for final proof of concept before taking decisive action. In these cases, evaluations help to test those hypotheses and generate the necessary data to see how those hypotheses hold up.

So how do we design evaluations so that they are truly used and useful for policy making?

- Be open and keep it simple. Evaluations must be made public and understandable, so that there is pressure to actually use them.

- Where’s the beef? Do the evaluations contain information that can actually be used by policy makers? If final conclusions for impact and action cannot be made, can we still learn other lessons in the process?

- Timing is everything. Evaluations should be done and completed at strategic times so that policy makers have the necessary data when they are making decisions. Incomplete but timely information may be more valuable for policymaking than conclusive post-mortems.

- Make evaluations constructive. Evaluations are more effective when they are seen as a support to get programs right rather than as an inquisitional judgment. People must believe in the power of evaluations so they won’t hide ‘unfavorable’ or ‘negative’ information during the evaluation process.

- Ask the right questions. When creating evaluations, start by determining what’s needed by policy makers. Identify the relevant research questions first, then methodology and technology can follow.

The World Bank’s Governance Global Practice aims to help governments build this architecture by integrating the evaluation process into the policy making process. We can work with planners, budget offices, and Centers of Government to help connect evaluation architecture to the policy side.

Although we are still developing this area of work, it is critical to our mission and desire to help spark transformational change in developing countries. Without the advantage gained from evaluations, these changes can go either way.

So in response to the question, “Why focus on results when no one uses them?” I would beg to differ with its implicit premise. We do use results; let’s just ensure that they are relevant, timely, and understandable enough to provide useful guidance for action.
