My oldest child started middle school this year, and I suddenly started receiving emails every other week with updates on his grades. I’d never received anything like this before and was overwhelmed (and a little annoyed) by the amount of information. Someone told me that I could go to some website to opt out, but that seemed like too much work. So I continue getting the emails. And sure enough, now I follow up: “Hey, are you going to speak to your teacher about making up that assignment? Email her if you don’t manage to talk with her today.” (I'm sure my son loves it.) Despite my initial aversion, the emails seem to work.

So I was interested recently to speak with Peter Bergman, a professor at Teachers College, about his paper “Parent-Child Information Frictions and Human Capital Investment: Evidence from a Field Experiment.” In an experiment with high schools in Los Angeles, researchers provided information to parents about missing assignments and grades, just about every other week. (Bergman noted that especially for immigrant parents, grades were much less useful than missing assignments, as not all parents were familiar with the US’s grading system.) The information was provided via text message, email, or phone call. Of the parents, 79 percent requested text messages, 13 percent requested email, and just 8 percent wanted a phone call. One key finding: “Parents have upwardly-biased beliefs about their child’s effort in school: when asked to estimate how many assignments their child has missed in math class, parents vastly understate.”

What happened? “For high school students, GPA increased by 0.19 standard deviations. There is evidence that test scores for math increased by 0.21 standard deviations, though there was no gain for English scores (0.04 standard deviations). These effects are driven by several changes in students’ inputs: assignment completion increased by 25% and the likelihood of unsatisfactory work habits and cooperation decreased by 24% and 25%, respectively. Classes missed by students decreased by 28%.”

But at what cost? “Contacting parents via text message, phone call or email took approximately three minutes per student. Gathering and maintaining contact numbers adds five minutes of time per child, on average. The time to generate a missing-work report can be almost instantaneous or take several minutes depending on the grade book used and the coordination across teachers. For this experiment it was roughly five minutes. Teacher overtime pay varies across districts and teacher characteristics, but a reasonable estimate prices their time at $40 per hour. If teachers were paid to coordinate and provide information, the total cost per child per 0.10 standard-deviation increase in GPA or math scores would be $156” (as compared to $538 for a recent intervention providing financial incentives for students).
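To get a feel for these numbers, here is a rough back-of-the-envelope sketch in Python. It only converts each quoted time component into dollars at the quoted $40 per hour and compares the two quoted cost-effectiveness figures. It does not try to reproduce the $156 figure, since the quote does not say how many rounds of contact or which time components go into it. The variable and function names are made up for illustration.

```python
# Figures quoted in the paragraph above.
HOURLY_RATE_USD = 40.0  # quoted estimate of the price of teacher time
TASK_MINUTES = {
    "contact parents": 3,
    "maintain contact numbers": 5,
    "generate missing-work report": 5,
}
# Quoted cost per child per 0.10 standard-deviation gain.
COST_PER_TENTH_SD = {"information": 156.0, "financial incentives": 538.0}


def minutes_to_usd(minutes, hourly_rate=HOURLY_RATE_USD):
    """Convert teacher time in minutes to dollars at an hourly rate."""
    return minutes / 60.0 * hourly_rate


# Dollar value of each time component, taken one at a time.
task_costs = {task: minutes_to_usd(m) for task, m in TASK_MINUTES.items()}

# How many times more expensive the incentive program is per 0.10 SD.
cost_ratio = (
    COST_PER_TENTH_SD["financial incentives"] / COST_PER_TENTH_SD["information"]
)

for task, usd in task_costs.items():
    print(f"{task}: ${usd:.2f}")
print(f"financial incentives cost about {cost_ratio:.1f}x as much per 0.10 SD")
```

At $40 per hour, three minutes of teacher time is worth $2, so the per-contact cost is small. By the quoted figures, the financial-incentive program costs roughly three and a half times as much for the same gain.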

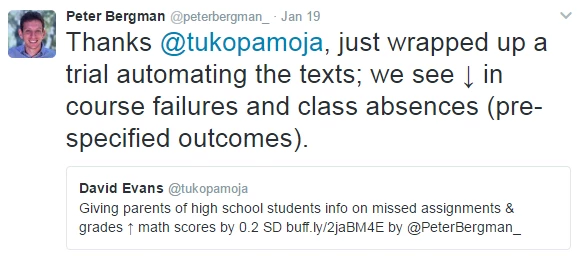

Subsequently, Bergman has been working on a follow-on trial, where the texts are automated (at a marginal cost of 0.2 cents, or $0.002, per text).

Bergman pointed to other work along these same lines, improving student outcomes by giving families better information:

Study #2: Rogers and Feller: “Reducing student absences at scale by involving families” (2017)

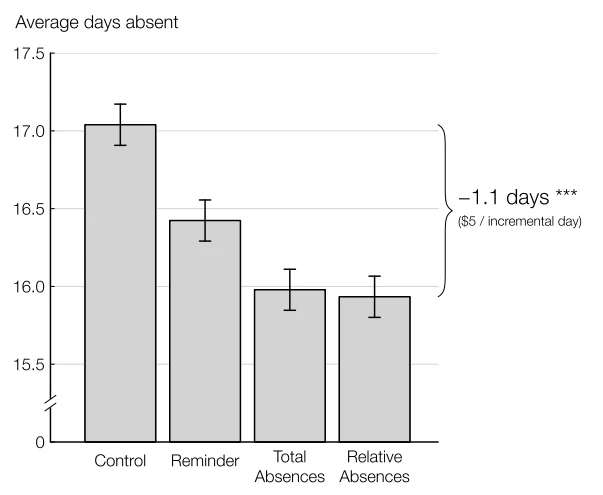

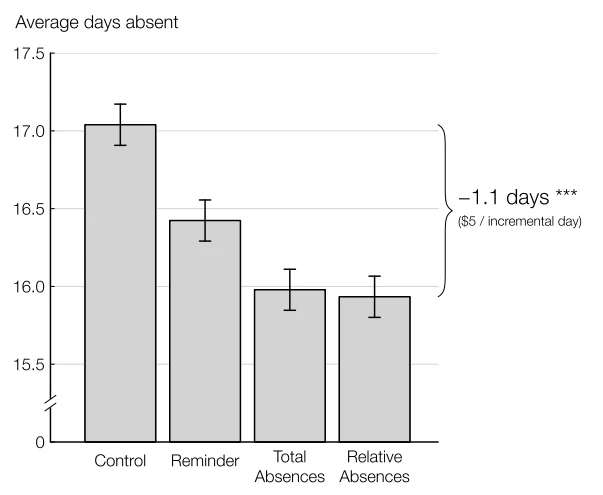

In this study, 28,000 households were randomly assigned to one of three treatments (or business as usual). All treatments were by mail, sent about 5 times per year.

- Reminder treatment: “Reminded parents of the importance of absences and of their ability to influence them.”

- Total absences treatment: “Added information about students’ total absences”

- Relative absences treatment: “Further added information about the modal number of absences among target students’ classmates”

Source: Rogers & Feller 2017

And here’s a tidbit, along the same lines as Bergman’s finding that parents tend to overestimate their children’s effort: “A pilot survey of parents of high-absence students shows that parents underestimated their own students’ absences by a factor of 2 (9.6 estimated vs. 17.8 actual).”

Study #3: Dizon-Ross: “Parents’ Beliefs and Children’s Education: Experimental Evidence from Malawi” (2016)

This field experiment in Malawi provides “information to parents with children in primary school about the children’s ‘academic performance’” (i.e., performance on achievement tests).

Dizon-Ross has three main findings:

- Parents are – once again – misled about their children’s performance. “On average, parents’ beliefs about academic performance diverge from true performance by more than one standard deviation of the performance distribution.” Separately, she points to earlier work from Banerjee et al. (2010) in India demonstrating that parents have mistakenly optimistic beliefs about their children’s learning: “38 percent of the parents of the children who could barely decipher letters thought their children could read and understand a story.” So the evidence is accumulating that parents have incorrect information about their children’s effort or performance.

- “Due to inaccurate beliefs, investments are not as well tailored to academic performance as parents would like.” So parents buy books at the wrong level, for example, because they don’t know what level their child is at.

- “Providing information to parents about their children’s academic performance causes them to reallocate.” Notably, some of the reallocations are unambiguously good: For example, parents are more likely to buy the right books for their children. But others are more ambiguous: Parents are more likely to reallocate resources away from lower-performing children toward higher-performing children, reinforcing inequalities in performance.

Finally, Berlinski et al. have an experiment in Chile that “offered each participating parent the chance to receive high frequency information about their selected child via text message (SMS messages).” What did the texts provide? “Attendance, behavior and mathematics test scores of their child. In addition to the information SMS messages, parents of both treatment and control groups received general SMS messages about school meetings, holidays and other general school matters throughout the year.”

What did they find? “The SMS treatment had immediate impacts on grades and on grade progression. After the first four months of treatment (at the end of the first school year), average math grades and cumulative math GPA rise by 0.08 standard deviations for SMS treatment students relative to control students. The probability of earning a passing grade in math increases by 2.8 percentage points (relative to a mean of 90%). Exposure to the SMS treatment increases the chances of attending school for more than 85% of the time by 6.6 percentage points. The 85% cutoff is one of the necessary conditions for grade progression. The share of students reported to have extremely bad behavior (e.g. bullying, or physical/verbal violence) falls by a substantial 1.25 percentage points, or 20% relative to the mean rate of bad behavior. And exposure to treatment increases the probability that a student passes the grade at the end of the year by 2.9 percentage points, virtually eliminating grade failure among marginal students.”
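The quote mixes percentage-point changes with relative changes, which are easy to conflate. Here is a minimal Python sketch of how the two relate, using only the numbers quoted above. It assumes the "20%" is computed against the same mean rate that the 1.25-point drop applies to. The function names are hypothetical.

```python
def implied_baseline(pp_change, relative_share):
    """Baseline rate (in percent) implied by a percentage-point change
    that is also reported as a relative change (0.20 means 20%)."""
    return pp_change / relative_share


def relative_change(pp_change, baseline_pct):
    """Relative change (as a fraction) implied by a percentage-point
    change from a baseline expressed in percent."""
    return pp_change / baseline_pct


# A 1.25-point drop that equals 20% of the mean implies this mean rate.
bad_behavior_baseline = implied_baseline(1.25, 0.20)

# A 2.8-point gain on a 90% pass rate, expressed as a relative change.
math_pass_relative = relative_change(2.8, 90.0)

print(f"implied mean rate of extremely bad behavior: {bad_behavior_baseline:.2f}%")
print(f"relative gain in math pass rate: {math_pass_relative:.1%}")
```

So the 1.25-point drop implies that only about 6 percent of students were reported for extremely bad behavior to begin with. The 2.8-point gain in math pass rates is a relative increase of about 3 percent, because the baseline was already high.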

Additional bits:

- “We find evidence of positive classroom level spillovers for grade and attendance outcomes, and for the probability of passing the grade.” [Share of treated kids per classroom was varied experimentally]

- “We show that the frequency of contact matters: the positive effects on school outcomes were larger when treated parents were sent more total messages.” [Not varied experimentally: “The variation in total number of messages sent depended partly on when the school was entered into the treatment, and partly on how often there was updated information (e.g. on recent math grades) received from the schools.”]

This also serves as a reminder that there is significant complementary evidence from high-income countries with relevance to middle- and low-income countries. Most reviews of the education literature with an aim to providing lessons for middle- and low-income countries (including mine) focus exclusively on evidence from “the developing world” or “in developing countries” (exhibits a, b, c, d, and e). While I understand the need to limit scope for practical reasons, such an exclusive focus eliminates highly relevant evidence from the process of supporting evidence-informed policy.
