I recently became a co-editor at the World Bank Economic Review, and was surprised to learn how low the acceptance rate is for submitted papers. The American Economic Review and other AEA journals, such as the AEJ Applied, publish annual editor reports that make key information on acceptance rates and review times publicly available, but no comparable information is available for development economics journals. Since I think many of our readers would be interested in this information, I contacted the editors at different journals, and thanks to their cooperation, I can share some key figures.

In deciding which journal to submit a paper to, three key questions that are often on the minds of authors are 1) Is this a good quality, high visibility journal to publish my work? 2) What are the chances of my paper being accepted?, and 3) How long will they take to review my work? I address each in turn.

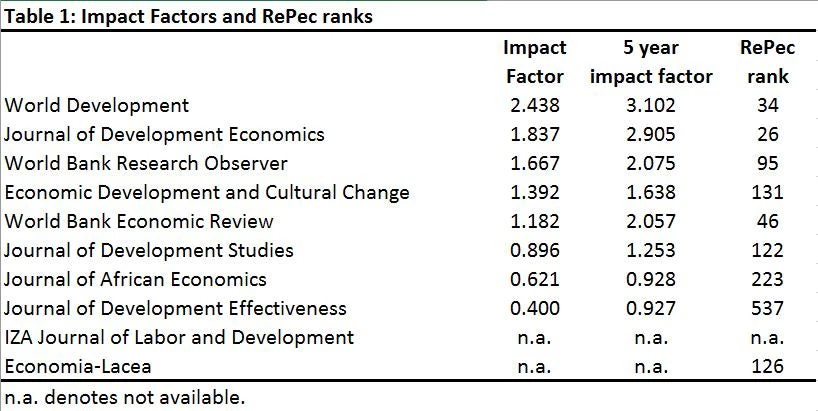

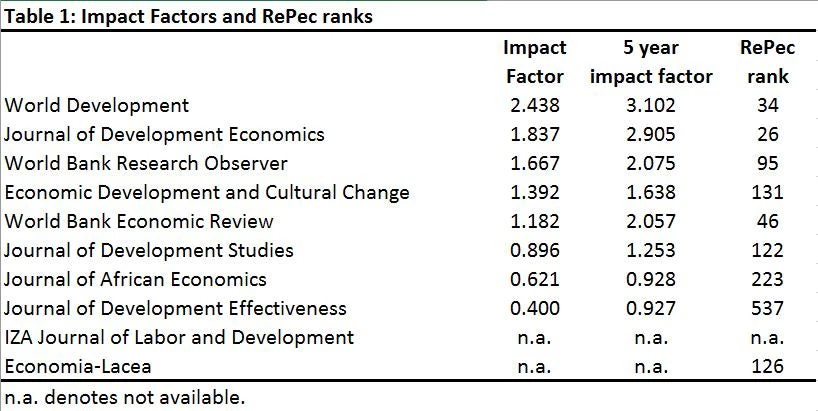

I consider several metrics of journal quality:

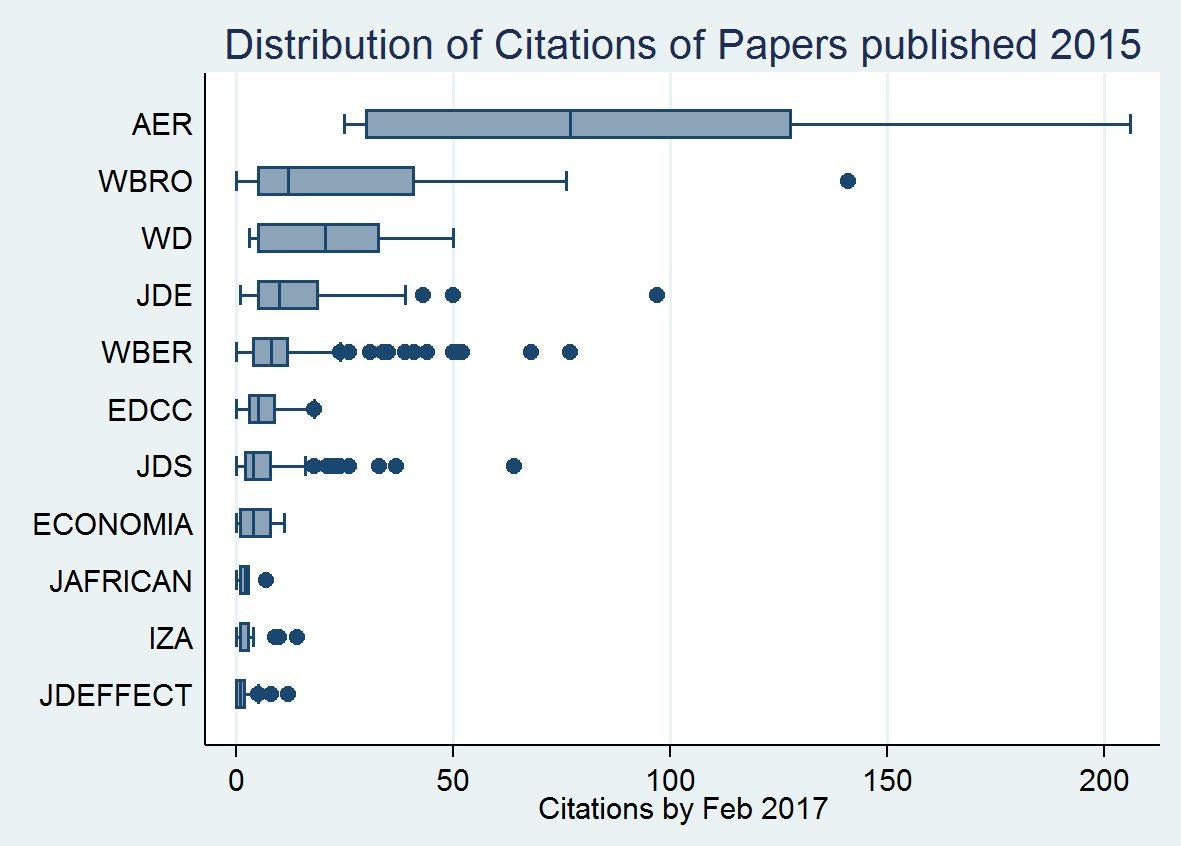

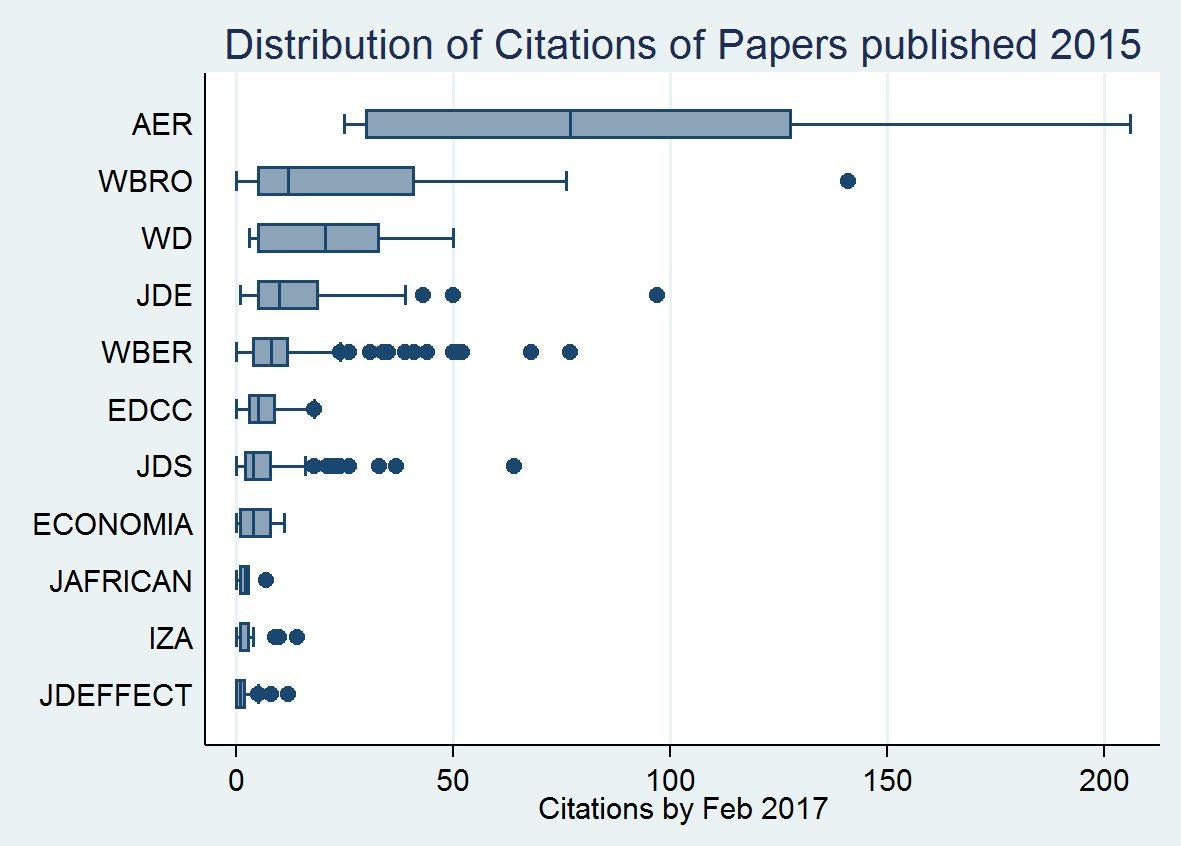

Note how low the impact factors are for all journals, with an average of at most 1-2 citations per article per year. There are several problems with these, including the fact that the means can be skewed by a few outliers, and that the long publication lag in economics makes it take a long time for citations to show up. I therefore took the 2015 issues of each journal, and looked up the Google Scholar citations of each paper published. Figure 1 then gives a boxplot of the data, sorted by mean number of citations.

Figure 1: Boxplot of citations as of February 2017 of articles published in 2015

(notes: I excluded papers and proceedings and the Journal of African Economics conference symposiums, the AER is only for development papers published there and is included as a benchmark, and I took 6 out of 12 issues of World Development as a sample given the large number of papers there).

Three things are key from this figure. First, the number of citations are much larger than the impact figures would suggest for many journals: e.g. the WBER has a mean of 29 and median of 12 citations per article, compared to the impact factor of 1.18. Second, comparing journals based just on mean citations seems an incredibly poor way of assessing the typical quality. The medians can differ substantially from the means, the ranges can be large, and the distributions are skewed. Third, there is a lot of overlap in the distributions (a regression of citations in development journals on journal dummies has an R 2 of 0.18).

2. What are the chances of a paper being accepted?

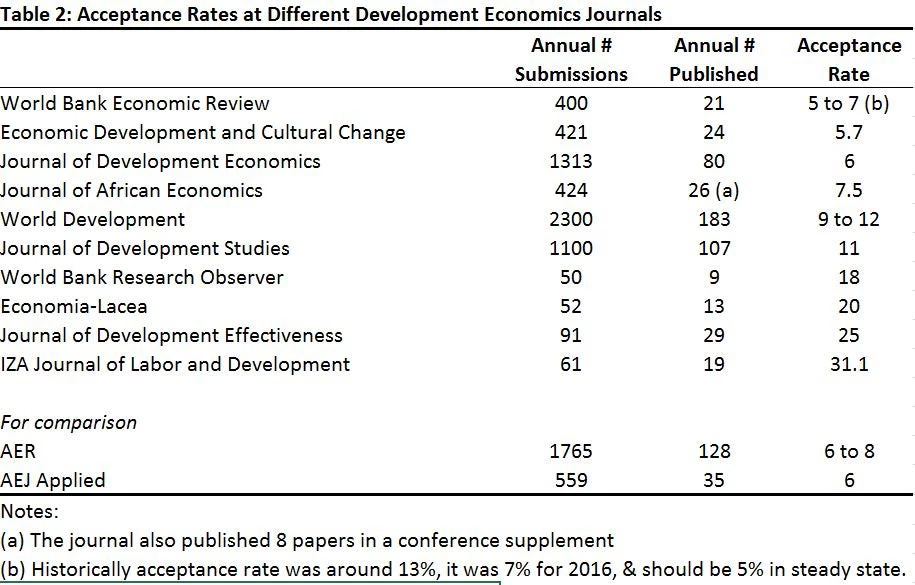

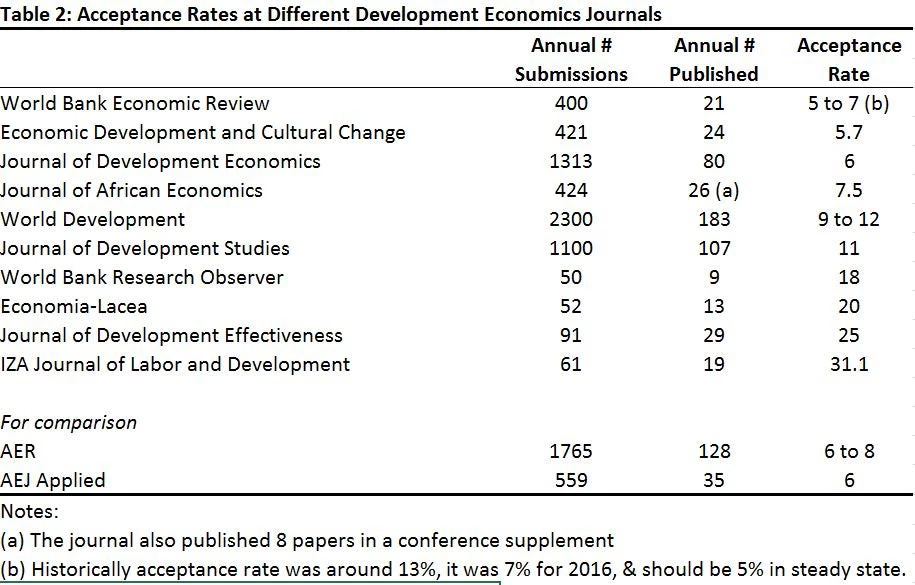

Table 2 reports the number of submissions received by each journal in 2016, the number of papers published, and the acceptance rate. By way of comparison, I also report these for the AER and AEJ applied. I found three things notable in this table: (i) The large number of papers many journals receive as submissions each year: more than a paper every day of the year for the WBER, EDCC and Journal of African Economics, more than 1,000 papers for the JDS and JDE, and more than 2,300 for World Development! This shows the enormous job facing editors, who have a huge number of papers to deal with. (ii) the acceptance rates at the top development journals are as low (5 to 7 percent) as for the AER and AEJ applied. While this doesn’t control for the quality of submissions, I still found the rates lower than I would have thought; (iii) I hadn’t realized before quite the extent of variation in the number of articles published by journals

(note: in data provided Feb 25, 2017: EDCC had acceptance rate of 9% in 2015, and 8.2% for papers submitted in 2016 for which final decisions had been made).

3. How long does the review process take at each journal?

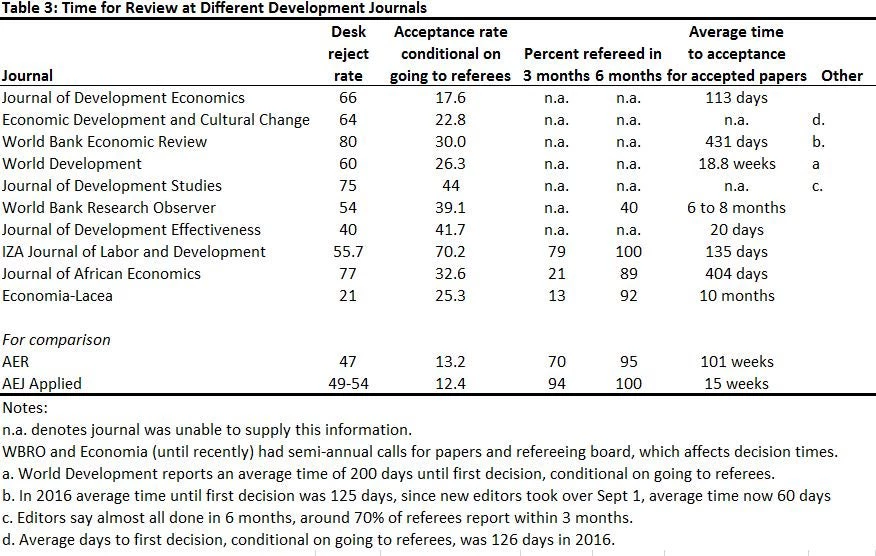

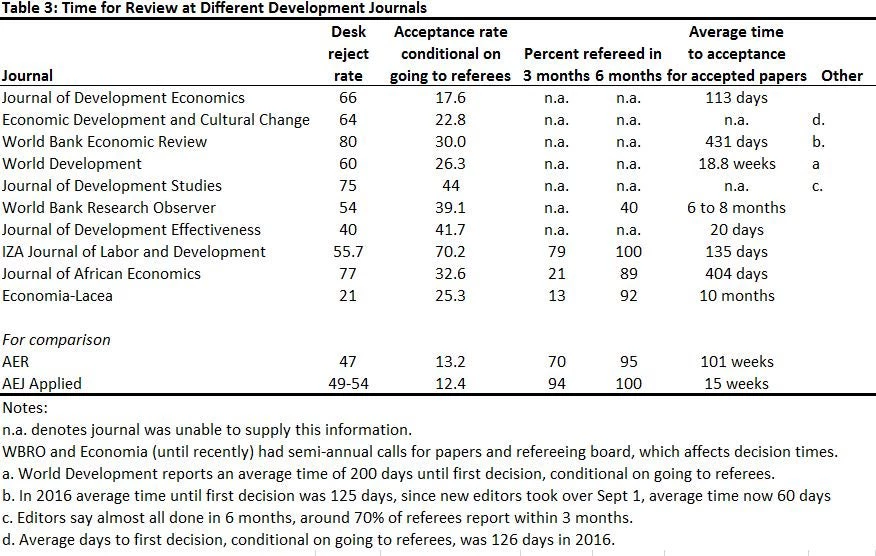

Table 3 reports data on how long journals take deciding on papers. This information was the most difficult to obtain from many journals, and it appears that a number of journals do not carefully track standardized metrics on these. The first piece of information I requested was the desk rejection rate. Given the large number of submissions and low overall acceptance rates at many journals, desk rejections are an efficient way to screen out many papers and render quick decisions on them. We see the desk rejection rates at the top development journals are more than 50 percent, and are actually higher than at the AER and AEJ applied. Using these desk rejection rates, I calculate the approximate acceptance rate conditional on going to referees in the second column – you can see that even if you pass the desk rejection stage, less than one-third of papers sent to referees are accepted at many journals.

(note the table above was updated Feb 25, 2017, to reflect new data provided by EDCC).

Secondly, I wanted to see how long it takes to hear back from journals conditional on them sending papers to referees. The AEA journals report the percent of papers refereed in 3 months and within 6 months, with a goal that most papers should be refereed within 3 months. Few of the development journals appear to track these statistics, and it is only the journals with fewer submissions whose editorial assistants were able to easily calculate these. Another statistic many journals report is average time to acceptance for accepted papers. However, it is not clear that journals are measuring this the same way – I think some journals include only the time the paper is at the journal and not time spent with authors doing revisions, while others include the full time from first submission to final decision. Nevertheless, they do show how slow the process can be by time you go through revisions and refereeing- almost two years at the AER, and a year or more at some development journals.

Of course these outcomes depend heavily on the referees, in addition to what the editors do. I estimate that the journals for which I have data sent 1,960 papers to referees in 2016, which given 2-3 referees per paper, is perhaps 5,000 referee reports! And this doesn’t even include revisions. So pity the poor editors who have to chase up that many reports, and please do your part to referee on time.

Caveats

There are a number of issues that should be kept in mind when considering the above data:

I hope this is useful for our readers – perhaps in making you feel better about a recent rejection, happier about an R&R or acceptance, or in helping decide where to submit. I would love your feedback on whether this is the case, and what could be done to make it even more useful if we do this again in the future. Are there journals I missed, other statistics you would like to know, or anything else?

In deciding which journal to submit a paper to, authors often have three key questions in mind: (1) Is this a good-quality, high-visibility journal in which to publish my work? (2) What are the chances of my paper being accepted? (3) How long will the journal take to review my work? I address each in turn.

1. Is this a good-quality, high-visibility journal in which to publish my work?

I consider several metrics of journal quality:

- The best known is the journal's impact factor, which is available in both a 2-year and a 5-year version. The standard (2-year) impact factor for a given year is the number of citations received that year by papers the journal published in the previous two years, divided by the number of those papers; the 5-year impact factor does the same for papers published in the previous five years.

- A second measure comes from RePEc's journal rankings, which take into account article downloads and abstract views in addition to citations.
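To make the impact factor definition concrete, here is a minimal sketch. The helper `impact_factor` and all the numbers are hypothetical, and it ignores details of the official Clarivate calculation (such as which items count in the denominator); it only shows that the same citation data give a 2-year or a 5-year factor depending on the window.

```python
def impact_factor(cites_in_year, items_by_year, year, window=2):
    """Impact factor of a journal for `year` (hypothetical helper).

    cites_in_year: dict mapping publication year -> citations received during
        `year` by items the journal published in that publication year.
    items_by_year: dict mapping publication year -> number of items published.
    window: 2 for the standard impact factor, 5 for the 5-year version.
    """
    pub_years = range(year - window, year)  # e.g. 2014 and 2015 for year=2016
    cites = sum(cites_in_year.get(y, 0) for y in pub_years)
    items = sum(items_by_year.get(y, 0) for y in pub_years)
    if items == 0:
        raise ValueError("no items published in the window")
    return cites / items


# Made-up numbers for one journal; citations are those received during 2016.
cites_2016 = {2011: 90, 2012: 80, 2013: 70, 2014: 60, 2015: 45}
items = {2011: 40, 2012: 40, 2013: 45, 2014: 40, 2015: 50}

print(round(impact_factor(cites_2016, items, 2016), 2))            # 1.17 (2-year)
print(round(impact_factor(cites_2016, items, 2016, window=5), 2))  # 1.6 (5-year)
```

Either way the result is a mean per article per year, which is why it looks small next to the cumulative citation counts discussed below.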

Note how low the impact factors are for all journals: on average, at most 1 to 2 citations per article per year. These averages have several problems, including that means can be skewed by a few outliers and that the long publication lags in economics mean citations take a long time to show up. I therefore took the 2015 issues of each journal and looked up the Google Scholar citations of every paper published in them. Figure 1 shows a boxplot of these data, with journals sorted by mean number of citations.

Figure 1: Boxplot of Google Scholar citations, as of February 2017, of articles published in 2015

(Notes: I excluded Papers and Proceedings articles and the Journal of African Economies conference symposiums; the AER data cover only development papers published there and are included as a benchmark; and, given the large number of papers World Development publishes, I sampled 6 of its 12 issues.)
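The statistics behind a boxplot like Figure 1 can be sketched as follows. This is an illustration under assumptions, not the code used for the figure: `citation_summary` is a hypothetical helper and the citation counts are made up. It computes each journal's mean, quartiles, and range, then sorts journals by mean as the figure does.

```python
import statistics


def citation_summary(citations_by_journal):
    """Boxplot-style summary of per-article citation counts for each journal,
    sorted by mean citations (highest first)."""
    rows = []
    for journal, cites in citations_by_journal.items():
        # "inclusive" treats the min and max as the 0th and 100th percentiles
        q1, median, q3 = statistics.quantiles(cites, n=4, method="inclusive")
        rows.append({
            "journal": journal,
            "mean": statistics.mean(cites),
            "min": min(cites),
            "q1": q1,
            "median": median,
            "q3": q3,
            "max": max(cites),
        })
    return sorted(rows, key=lambda row: row["mean"], reverse=True)


# Hypothetical citation counts for the articles two journals published in one year.
data = {
    "Journal A": [0, 2, 5, 12, 110],  # one highly cited outlier
    "Journal B": [3, 8, 10, 14, 20],
}
for row in citation_summary(data):
    print(row["journal"], row["mean"], row["median"])
```

In this toy example Journal A ranks first on the mean (25.8) only because of a single outlier, while its median (5) is below Journal B's (10).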

Three things stand out from this figure. First, for many journals the number of citations is much larger than the impact factors would suggest: e.g., the WBER has a mean of 29 and a median of 12 citations per article, compared with an impact factor of 1.18. Second, comparing journals on mean citations alone seems an incredibly poor way of assessing typical quality: medians can differ substantially from means, ranges can be large, and the distributions are skewed. Third, the distributions overlap a lot (a regression of citations for articles in development journals on journal dummies has an R² of 0.18).
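One way to read the R² of 0.18: with a full set of journal dummies, each article's fitted value is just its journal's mean, so R² equals the between-journal sum of squares divided by the total sum of squares. Roughly 18 percent of the variation in citations is therefore explained by which journal an article appeared in. A minimal sketch of that identity, with a hypothetical helper and toy data:

```python
def r2_on_journal_dummies(citations_by_journal):
    """R^2 from an OLS regression of article citations on journal dummies.

    With a full set of journal dummies, each fitted value is the journal's
    mean, so R^2 = between-journal sum of squares / total sum of squares.
    """
    all_cites = [c for cites in citations_by_journal.values() for c in cites]
    grand_mean = sum(all_cites) / len(all_cites)
    ss_total = sum((c - grand_mean) ** 2 for c in all_cites)
    ss_between = sum(
        len(cites) * (sum(cites) / len(cites) - grand_mean) ** 2
        for cites in citations_by_journal.values()
    )
    return ss_between / ss_total


# First: well-separated journals. Second: identical means, so dummies explain nothing.
print(r2_on_journal_dummies({"A": [1, 3], "B": [5, 7]}))     # 0.8
print(r2_on_journal_dummies({"A": [0, 40], "B": [10, 30]}))  # 0.0
```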

2. What are the chances of a paper being accepted?

Table 2 reports the number of submissions each journal received in 2016, the number of papers published, and the acceptance rate. For comparison, I also report these for the AER and AEJ Applied. Three things in this table stood out to me. (i) The sheer number of submissions many journals receive each year: more than one paper for every day of the year at the WBER, EDCC, and Journal of African Economies; more than 1,000 papers at the JDS and JDE; and more than 2,300 at World Development! This shows the enormous job facing editors. (ii) Acceptance rates at the top development journals (5 to 7 percent) are as low as those at the AER and AEJ Applied. While this doesn't control for the quality of submissions, the rates were still lower than I would have expected. (iii) I hadn't previously realized how much the number of articles published varies across journals.

(Note: according to data provided on Feb 25, 2017, EDCC had an acceptance rate of 9% in 2015, and 8.2% for papers submitted in 2016 on which final decisions had been made.)
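The EDCC note hints that the denominator matters: for a recent cohort, some papers are still under review. A minimal sketch, assuming the rate is defined over papers with a final decision (the helper name and all numbers are hypothetical), shows how much lower the rate looks if pending papers are counted in the denominator instead:

```python
def acceptance_rate(accepted, decided):
    """Share of papers with a final decision that were accepted."""
    if decided <= 0:
        raise ValueError("need at least one decided paper")
    return accepted / decided


# Hypothetical submission cohort, part-way through the review cycle.
submitted, decided, accepted = 500, 400, 30
print(f"{acceptance_rate(accepted, decided):.1%}")    # decided papers only
print(f"{acceptance_rate(accepted, submitted):.1%}")  # all submissions, incl. pending
```

The first figure is 7.5 percent and the second 6.0 percent, so rates for a recent year are best compared only when journals use the same definition.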

3. How long does the review process take at each journal?

Table 3 reports data on how long journals take to decide on papers. This information was the hardest to obtain from many journals, and it appears that a number of journals do not carefully track standardized metrics. The first piece of information I requested was the desk rejection rate. Given the large number of submissions and low overall acceptance rates at many journals, desk rejections are an efficient way to screen out many papers and give authors quick decisions. Desk rejection rates at the top development journals exceed 50 percent, and are actually higher than at the AER and AEJ Applied. In the second column, I use these desk rejection rates to calculate the approximate acceptance rate conditional on a paper being sent to referees: even if you pass the desk rejection stage, fewer than one-third of refereed papers are accepted at many journals.

(Note: the table above was updated on Feb 25, 2017, to reflect new data provided by EDCC.)
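The conditional acceptance rate follows from one assumption: desk-rejected papers are never accepted. Then P(accept) = P(refereed) × P(accept | refereed), and P(refereed) = 1 − desk rejection rate. A minimal sketch (the function name is hypothetical); the result is only approximate because the overall acceptance rate and the desk rejection rate may be measured over different cohorts of papers:

```python
def acceptance_rate_if_refereed(acceptance_rate, desk_rejection_rate):
    """Approximate P(accepted | sent to referees).

    Assumes desk-rejected papers are never accepted, so
    P(accept) = P(refereed) * P(accept | refereed), with
    P(refereed) = 1 - desk_rejection_rate.
    """
    if not 0 <= desk_rejection_rate < 1:
        raise ValueError("desk rejection rate must be in [0, 1)")
    return acceptance_rate / (1 - desk_rejection_rate)


# Illustrative inputs in the ranges described above (not any specific journal).
print(f"{acceptance_rate_if_refereed(0.06, 0.60):.0%}")  # 15%
```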

Second, I wanted to see how long it takes to hear back from journals once they send papers to referees. The AEA journals report the percentage of papers refereed within 3 months and within 6 months, with the goal that most papers be refereed within 3 months. Few of the development journals appear to track these statistics, and only at the journals with fewer submissions could editorial assistants easily calculate them. Another statistic many journals report is the average time to acceptance for accepted papers. However, journals do not appear to measure this in the same way: I think some count only the time the paper spends at the journal, excluding the time authors spend on revisions, while others count the full time from first submission to final decision. Nevertheless, these figures show how slow the process can be once you go through refereeing and revisions: almost two years at the AER, and a year or more at some development journals.
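Both statistics can be sketched in a few lines, under stated assumptions. The helper names are hypothetical, roughly 90 and 180 days stand in for "3 months" and "6 months", and the review timeline is made up. The second function shows how one accepted paper produces two very different "time to acceptance" figures depending on whether revision time is counted:

```python
from datetime import date


def share_decided_within(days_to_decision, limit_days):
    """Share of refereed papers whose first decision came within limit_days."""
    if not days_to_decision:
        raise ValueError("need at least one refereed paper")
    return sum(d <= limit_days for d in days_to_decision) / len(days_to_decision)


def time_to_acceptance(rounds):
    """Two ways of timing one accepted paper.

    rounds: chronological list of (date_received, date_decision_sent) pairs,
    one per submission round. Returns (days_at_journal, days_total): the first
    counts only time the paper sat with the journal; the second runs from first
    submission to final decision, including time authors spent on revisions.
    """
    days_at_journal = sum((sent - received).days for received, sent in rounds)
    days_total = (rounds[-1][1] - rounds[0][0]).days
    return days_at_journal, days_total


# Hypothetical days from submission to first decision for four refereed papers.
days = [45, 80, 120, 200]
print(share_decided_within(days, 90), share_decided_within(days, 180))  # 0.5 0.75

rounds = [
    (date(2016, 1, 4), date(2016, 4, 4)),  # first submission -> R&R
    (date(2016, 7, 1), date(2016, 9, 1)),  # revision -> acceptance
]
print(time_to_acceptance(rounds))  # (153, 241)
```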

Of course, these outcomes depend heavily on the referees as well as on the editors. I estimate that the journals for which I have data sent 1,960 papers to referees in 2016, which, at 2 to 3 referees per paper, means perhaps 5,000 referee reports! And this doesn't even include revisions. So pity the poor editors who have to chase up that many reports, and please do your part by refereeing on time.
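The "perhaps 5,000" figure is simple arithmetic on the 1,960 refereed papers. This sketch counts first-round reports only and assumes every refereed paper gets 2 to 3 reports:

```python
papers_refereed = 1960  # papers sent to referees in 2016 (from the text)
low, high = papers_refereed * 2, papers_refereed * 3  # 2 to 3 referees per paper
midpoint = (low + high) // 2
print(low, high, midpoint)  # 3920 5880 4900
```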

Caveats

There are a number of issues that should be kept in mind when considering the above data:

- A number of the journals have recently changed editors, so the statistics here may reflect decisions made under a former editorial team. Indeed, several of the new editors expressed a desire to improve the refereeing and decision process under their leadership. There was even a request to make this an annual "state of development journals" report so that progress can be tracked.

- Another issue I haven't addressed is the time between acceptance and publication in a numbered volume. In some of the younger journals this gap is very small, whereas it can be a year or more at other development journals with publication backlogs. This matters for citation counts measured from the date of publication: a journal that accepts and publishes an article quickly gives it less time to gather citations than a journal that takes a long time to review the paper and then lists it as forthcoming for over a year before it is published.
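To illustrate why the start date matters, here is a hypothetical sketch; the post does not make any such adjustment. The helper `citations_per_year`, the dates, and the citation count are all assumptions. The same 30 citations look like a much higher annual rate when counted from a publication date that lags acceptance by a year:

```python
from datetime import date


def citations_per_year(citations, start, as_of):
    """Average citations per year between `start` and `as_of`."""
    years = (as_of - start).days / 365.25
    if years <= 0:
        raise ValueError("as_of must be after start")
    return citations / years


as_of = date(2017, 2, 1)
accepted, published = date(2014, 1, 1), date(2015, 1, 1)  # hypothetical dates
cites = 30  # hypothetical citation count

since_publication = citations_per_year(cites, published, as_of)
since_acceptance = citations_per_year(cites, accepted, as_of)
print(round(since_publication, 1), round(since_acceptance, 1))  # 14.4 9.7
```

Neither start date is obviously right, since papers often circulate as working papers well before acceptance; the sketch only shows how sensitive per-year comparisons are to that choice.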

I hope this is useful for our readers, perhaps by making you feel better about a recent rejection or happier about an R&R or acceptance, or by helping you decide where to submit. I would love your feedback on whether it was useful, and on what could make it even more useful if we do this again in the future. Are there journals I missed, other statistics you would like to see, or anything else?
