Guest post by Dean Karlan and Jacob Appel

Dean has failed again! Dean and Jacob are kicking off our series on learning from failure by contributing a case that wasn’t in the book.

I. Background + Motivation

Recent changes in the aid landscape have allowed donors to support small, nimble organizations that can identify and address local needs. However, many have lamented the difficulties of monitoring the effectiveness of local organizations. At the same time as donors become more involved, the World Bank has called for greater “beneficiary control,” or more direct input from people receiving development services.

While attempts have been made to increase the accountability of non-profits, little research addresses whether doing so actually encourages donors to give more or to continue supporting the same projects. It may instead be that a lack of accountability merely provides donors with a convenient excuse for not giving, in which case donors would give the same amount even with greater accountability. Furthermore, little research indicates whether increased transparency and accountability would give organizations an incentive to provide services more effectively. Rigorous research will help determine the impact of increasing accountability, both on the behavior of donors and on the behavior of organizations working in the field.

II. Study design

IPA partnered with Global Giving, an online marketplace that directly connects donors with grassroots projects in the developing world, on a program that invited clients of local organizations to provide feedback about the services they receive by sending SMS messages to Global Giving. The client feedback would in turn be sent to potential donors.

We were interested in two research questions: (1) Would inviting clients to give feedback change the way organizations provide services? (2) Would seeing clients’ feedback make potential donors more (or less) likely to give? To answer the first question, we identified 37 organizations in Guatemala, Peru, and Ecuador, and randomly assigned about half to implement SMS client feedback. To answer the second, we randomly assigned those organizations’ donors to receive a solicitation email that featured either (i) no client feedback, (ii) generic examples of client feedback, or (iii) specific SMS messages the clients had sent.
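For readers who want to see the mechanics, a two-level assignment like the one described above could be sketched as follows. This is only an illustration, not the procedure we actually used: the `assign_arms` helper, the placeholder IDs, the donor count, and the seeds are all hypothetical, and the sketch does not stratify donors by organization.

```python
import random

def assign_arms(units, arms, seed):
    """Shuffle the units, then deal them round-robin into arms.

    Group sizes differ by at most one. The seed makes the draw reproducible.
    """
    rng = random.Random(seed)
    shuffled = list(units)
    rng.shuffle(shuffled)
    return {unit: arms[i % len(arms)] for i, unit in enumerate(shuffled)}

# Hypothetical placeholder IDs: the post does not list the 37 organizations.
organizations = [f"org_{i:02d}" for i in range(1, 38)]
org_arms = assign_arms(organizations, ["sms_feedback", "control"], seed=2024)

# Hypothetical donor list: the number of donors is an assumption.
donors = [f"donor_{i:03d}" for i in range(1, 301)]
donor_arms = assign_arms(
    donors,
    ["no_feedback", "generic_feedback", "specific_sms"],
    seed=2025,
)
```

Because 37 is odd, one arm necessarily ends up with one more organization than the other.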

III. Implementation plan

IPA and Global Giving held meetings with all the organizations involved to explain the study. For those organizations assigned to implement SMS feedback, IPA also planned to meet with beneficiaries to introduce the SMS system and invite their participation.

IV. What went wrong in the field + consequences

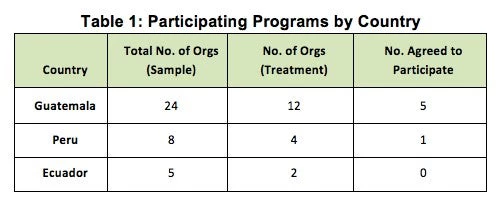

More than half the organizations assigned to implement the SMS feedback program refused to do so (see Table 1):

With so few organizations ultimately participating, our sample was compromised. We had insufficient statistical power to detect whether SMS feedback had an impact on service delivery or donor activity.
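To give a rough sense of why a shrinking sample hurts, the sketch below computes a minimum detectable effect (in standard-deviation units) for a simple two-group comparison of means under a normal approximation. It is a back-of-the-envelope illustration, not the power calculation from the study: the `minimum_detectable_effect` function, the 5% significance level, the 80% power target, and the arm sizes are all assumptions, and it ignores clustering, covariates, and the selection problem created when organizations opt out.

```python
from math import sqrt
from statistics import NormalDist

def minimum_detectable_effect(n_treat, n_control, alpha=0.05, power=0.80):
    """Smallest standardized effect detectable with the given arm sizes.

    Uses the normal approximation for a two-sided test of a difference
    in means with equal variances in both arms.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value of the two-sided test
    z_power = z.inv_cdf(power)          # quantile for the target power
    return (z_alpha + z_power) * sqrt(1 / n_treat + 1 / n_control)

# Hypothetical arm sizes: a 19/18 split of 37 organizations as planned,
# versus a treatment arm cut to 8 after refusals (8 is an assumed figure).
planned = minimum_detectable_effect(19, 18)
after_refusals = minimum_detectable_effect(8, 18)
```

Under these assumptions the detectable effect is already large with the planned arms and grows further once the treatment arm shrinks, which is the sense in which the sample was compromised.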

V. Lessons learned & remediation

It was instructive to hear some key reasons organizations chose not to participate:

- Concern about the potential for negative feedback from beneficiaries and the effect that feedback would have on donor activity. Although these organizations were offered the opportunity to review all feedback received about their projects and to respond to any negative feedback, only one organization that cited negative feedback as a concern agreed to participate.

- Organizations involved in sensitive work, such as work with street children or medical surgeries, saw the program as insensitive, unable to capture the essence of their work, and unlikely to resonate with donors.

- Some organizations perceived the program as requiring too many resources (time, effort, etc.), although in many cases, especially for organizations with well-defined target beneficiaries, the program required few if any resources on their part.

As for remediation, we considered ways to further entice organizations to participate. A first thought was to make participation compulsory. However, we feared that many organizations would simply leave Global Giving rather than take part. In addition, organizations compelled to participate would probably be less cooperative than those participating voluntarily. Another strategy would leave participation voluntary but publicly list the organizations that chose not to join. While such a strategy could weaken an organization’s relationship with Global Giving, it would likely also persuade organizations to join, since declining could cost them donations.

Ultimately, due to funding gaps, we were unable to try out these strategies to boost participation by organizations, and so the project stopped.

In retrospect, we might have benefited from a stronger pilot that demonstrated benefits to participating organizations (e.g., increased donations or donor satisfaction). But for those that did not rely heavily on Global Giving for donations, it would be hard in any case to justify the time and resource investment needed to participate in the study; their greatest enticement would have to be the actual value of client feedback. Alas, such organizations may have had more efficient ways of gathering that feedback.

Failure Tags

#LowParticipation

Reminder: we are looking for contributions that share impact evaluation failures; see the end of David’s book review.
