In recent decades, the amount of data produced has exploded, generating boundless opportunities for policies to improve people’s lives. Though data can be valuable in their raw form, the full value of data is only realized when they are analyzed to create insights, and these insights are converted to public policies or increased accountability. Academic research is a major source of these insights.

But how can we tell if countries are getting the most of the data they produce through academic research? This is what we try to answer in our new working paper, "Missing Evidence: Tracking Academic Data Use around the World."

One approach we could have taken to answer this question would be to hire a team to sift through the millions of academic articles that have been published in recent decades, classify what country the article is about and whether the article is using data. We took this approach for 3,500 articles. Assume it takes around 10 minutes to scan an article and check whether it uses data. To classify 1 million articles would require more than 167,000 hours of review time and more than US$3 million in costs. This exercise would need to be repeated over time to track how countries are performing over time.

Instead, we used recent advances in machine learning and applied Natural Language Processing (NLP) to do the work. For a one-time cost of around US$30,000, we were able to train and test a machine learning model to predict whether academic articles make use of data with high accuracy. The correlation between the number of articles using data based on the NLP model and the number based on human raters was around 0.99.

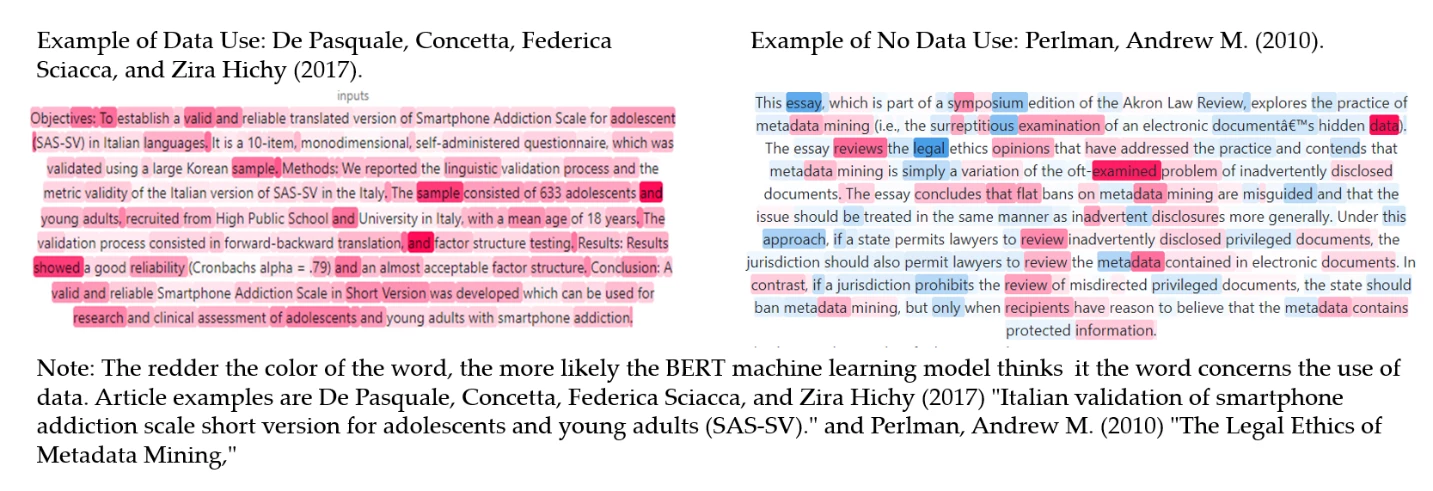

First, the country of origin was classified using the machine learning SpaCy library and through searches of the country name in the abstract of articles. Then using the trained machine learning model, we were able to categorize articles based on whether they likely used data. In the randomly drawn examples below (highlighted in dark red), several key words such as “sample”, “showed”, as well as numeric data in the abstract was indicative of data use in the first article. The second article, despite discussing issues related to data, was correctly classified as not using data.

Now what did we do with this data? Applying these tools to a corpus of over 1 million articles, we were able to estimate the number of articles using data for countries for each year between 2000 and 2020. Scroll down to explore our findings.

How can this data be used by countries? By matching this data with data from the World Bank’s Statistical Performance Indicators (SPI), we were able to group countries into:

- Data Deserts, which had low data supply and little data research;

- Data Swamps, with lots of data available according to the SPI, but wasn’t generating much research;

- Data Oases, countries with little data available, but managed to produce a large amount of research using data;

- and Data Lakes, countries with both high data supply and high data research.

Using this information, for instance, countries can consider whether they should prioritize producing more data or foster stronger use of existing data, such as by improving data services to connect academics to the data or improve data literacy in the country.

Looking for the data and code? All of the data and code are available as open access. Going forward, we plan to update these counts of research using data at the country level on an annual basis. The hope is that countries, academics, and other stakeholders can use this information to improve their data systems and ultimately create new insights to improve people’s lives.

Join the Conversation